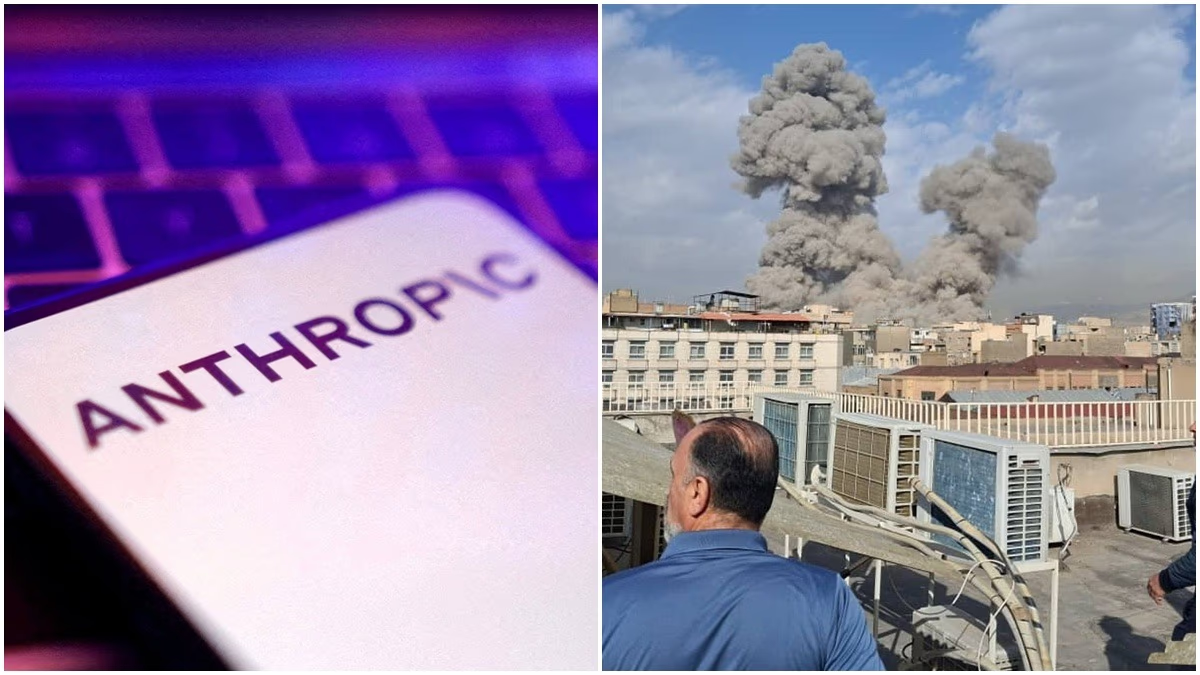

Amid the recent US strikes on Iran, much of the discussion has centered on the role artificial intelligence (AI) played in the operation.

The spotlight is on Claude, the AI model developed by Anthropic. Reports indicate that the US military used Claude in the strikes on Iran at the very moment President Donald Trump was instructing federal agencies to stop using Anthropic's technology.

The Partnership Between the Department of War and Anthropic

For some time, the Pentagon and Anthropic had a strategic partnership under which Anthropic supplied AI tools to the US Department of War. That deal has now collapsed, opening the way for a collaboration with OpenAI, the company led by Sam Altman.

The result is a contradiction that has ignited controversy: the technology was being banned on one hand while being used in combat operations on the other.

What Is Claude AI, and Why Did Trump Ban It?

Claude AI is more than a chatbot. It is a large language model capable of analyzing very large volumes of data.

Within the US defense establishment, it was used to interpret intelligence reports, analyze satellite imagery, and prioritize potential threats.

Claude-based analytics tools were used during the airstrikes on Iran. The AI does not carry out attacks itself; it advises military officials on critical targets, regional risks, and operational impacts.

Human decision-makers have the final say, yet AI accelerates the decision-making process.

Conflict Between Pentagon and Anthropic

Tension recently arose between the Pentagon and Anthropic. The defense department wanted Claude to be used more broadly across military functions.

Anthropic, however, had set ethical boundaries for its AI and maintained that its technology should not be used for autonomous weapons or mass surveillance.

Amid this friction, President Trump directed federal agencies to phase out Anthropic's technology, with some reports indicating that the company had been labeled a supply chain risk.

This dispute highlights a shift into an era in which AI has become integral to national security. Tasks that once took hours are now completed in minutes. But a critical question remains: if AI-based analysis contains an error, who bears responsibility?

The debate over Claude AI and the Iran strikes has prompted wide discussion in the US. How tech companies' ethical limits are balanced against government security needs will shape how deeply AI and warfare become intertwined in the future.

The Full Story of Claude AI’s Role in the Iran Strike

Reports suggest that the US military used Anthropic's Claude AI not to carry out the assault directly, but for pre-strike preparation and analysis.

This distinction is crucial. Claude is not a missile system; it is an advanced AI model that turns large volumes of data into human-readable insights.

Before such operations, militaries draw on many kinds of information: satellite images, drone footage, intercepted communications, field reports, and intelligence files. Reviewing all of this manually can take hours, whereas an AI system like Claude can process it in minutes.

The Specialized Use of Claude-Based Systems

According to reports, the Claude system served three primary functions.

The first was rapid analysis of intelligence data: flagging areas of increased activity, weapons movements, and threat alerts. The second was prioritizing potential targets, with the AI suggesting, based on the data, which targets might be more significant.

The third was assessing different scenarios: What might the impact be if a specific location were targeted? How much risk is involved, and would civilian infrastructure remain safe?

It is important to stress that humans made the critical decisions. The AI provided options and analysis; no machine pressed the button, although decisions were made faster.

The situation fueled debate because once an AI system is integrated into military networks and much of the data analysis depends on it, it cannot simply be switched off overnight. According to reports, Claude was already part of several security systems, which made withdrawing it completely during the Iran operation impractical.

Another aspect is that the AI's role extended beyond identifying targets to assessing potential damage: which areas might have civilians present, the likely direction of a blast, and which essential structures needed protection. In such scenarios, fast data processing becomes crucial for military officials.

This entire episode underscores a shift in modern warfare in which data has become the real source of power: the faster a nation can interpret data, the faster it can decide. AI is therefore no longer just a tech company's product but a crucial component of national security.